FashionMNIST Robustness Study

Built a reproducible PyTorch Lightning pipeline for six-class FashionMNIST classification, comparing CNN/MLP models and augmentation strategies.

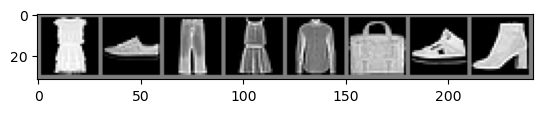

This project started as a compact computer vision pipeline for a restricted six-class version of FashionMNIST. I implemented a custom Dataset class that filters the original dataset, remaps labels, applies normalization, and optionally applies rotation-based augmentation.
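The filter, remap, and normalize steps can be sketched without any framework. This is only an illustration under stated assumptions: the class subset in `KEPT_CLASSES` is hypothetical (the six classes actually kept are not listed here), `MEAN` and `STD` are placeholder statistics, and `augment` is a stand-in for the optional rotation step. The class exposes `__len__` and `__getitem__`, the same map-style protocol a `torch.utils.data.Dataset` subclass would implement.

```python
from typing import Callable, Dict, List, Optional, Sequence, Tuple

Image = List[List[float]]  # grayscale image as rows of pixel values in [0, 1]

# Hypothetical subset of original FashionMNIST labels (assumption, not from the project).
KEPT_CLASSES: Tuple[int, ...] = (0, 2, 4, 6, 8, 9)
# Placeholder normalization statistics (assumption, not from the project).
MEAN, STD = 0.2860, 0.3530


class SixClassSubset:
    """Map-style dataset: filter to kept classes, remap labels, normalize."""

    def __init__(
        self,
        samples: Sequence[Tuple[Image, int]],
        kept_classes: Sequence[int] = KEPT_CLASSES,
        augment: Optional[Callable[[Image], Image]] = None,
    ) -> None:
        # Map each kept original label to a contiguous index 0..len(kept)-1.
        self.label_map: Dict[int, int] = {
            orig: new for new, orig in enumerate(kept_classes)
        }
        # Filter once up front so indexing stays cheap.
        self.samples = [(img, lbl) for img, lbl in samples if lbl in self.label_map]
        self.augment = augment

    def __len__(self) -> int:
        return len(self.samples)

    def __getitem__(self, idx: int) -> Tuple[Image, int]:
        img, lbl = self.samples[idx]
        # Optional augmentation (e.g. a rotation) runs before normalization.
        if self.augment is not None:
            img = self.augment(img)
        img = [[(p - MEAN) / STD for p in row] for row in img]
        return img, self.label_map[lbl]
```

Remapping to contiguous labels matters because a six-way classifier head expects targets in the range 0 to 5, whatever the original label IDs were.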

The training workflow is built on PyTorch Lightning, so each experiment can be reproduced from the command line: flags select the model type, learning rate, batch size, whether to train or evaluate, which checkpoint to load, and the augmentation settings.
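A command-line interface of this kind could look roughly like the sketch below. Every flag name and default here is hypothetical, chosen only to mirror the categories listed above; the project's actual flags may differ.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Build a parser with illustrative (hypothetical) experiment flags."""
    parser = argparse.ArgumentParser(description="Six-class FashionMNIST experiments")
    parser.add_argument("--model", choices=["cnn", "mlp"], default="cnn",
                        help="architecture to train or evaluate")
    parser.add_argument("--lr", type=float, default=1e-3, help="learning rate")
    parser.add_argument("--batch-size", type=int, default=64)
    parser.add_argument("--train", action="store_true", help="run training")
    parser.add_argument("--evaluate", action="store_true", help="run test evaluation")
    parser.add_argument("--checkpoint", type=str, default=None,
                        help="path of a checkpoint to load")
    parser.add_argument("--rotate-degrees", type=float, default=0.0,
                        help="rotation augmentation setting (0 disables it)")
    return parser


# Parsing an explicit argument list makes a run configuration easy to record.
args = build_parser().parse_args(["--model", "mlp", "--lr", "0.01", "--train"])
```

Keeping every experimental choice behind a flag means a run can be described by its command line alone, which is what makes the comparison between models and augmentation settings repeatable.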

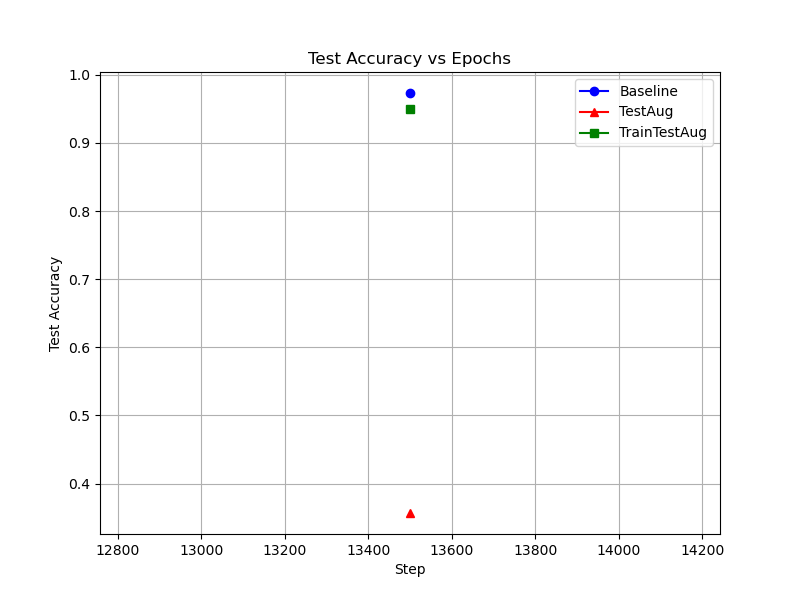

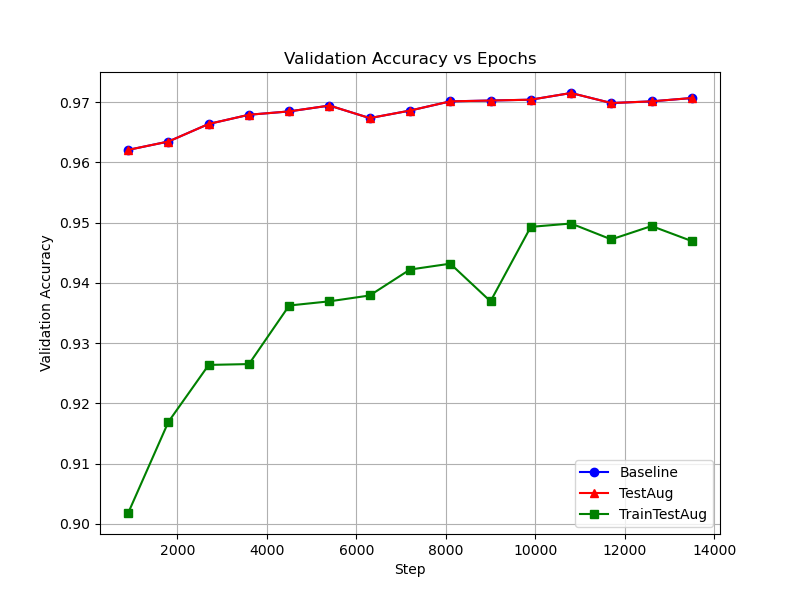

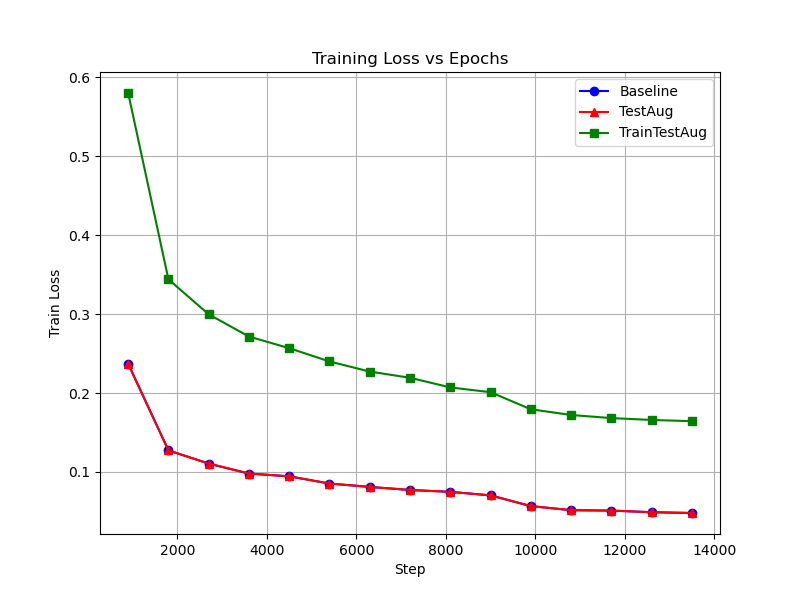

Beyond training a classifier, the interesting part was robustness: I tested what happens when the model sees rotated images at test time, then compared that with models trained using matching rotation augmentation.
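The robustness check boils down to scoring the same model twice, once on clean test images and once on transformed ones. The sketch below is framework-free and rests on assumptions: `predict` stands for any trained model's inference function, and a fixed 90-degree rotation is used only because it is exact on a pixel grid (the rotation angles used in the study are not stated here).

```python
from typing import Callable, List, Optional, Sequence, Tuple

Image = List[List[float]]


def rotate90(img: Image) -> Image:
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def accuracy(
    predict: Callable[[Image], int],
    samples: Sequence[Tuple[Image, int]],
    transform: Optional[Callable[[Image], Image]] = None,
) -> float:
    """Fraction of samples classified correctly, optionally after a transform."""
    correct = 0
    for img, label in samples:
        if transform is not None:
            img = transform(img)
        correct += int(predict(img) == label)
    return correct / len(samples)


def toy_predict(img: Image) -> int:
    """Toy stand-in for a model: class 0 if the top row is brighter than the bottom."""
    return 0 if sum(img[0]) > sum(img[-1]) else 1


toy_samples = [([[1.0, 1.0], [0.0, 0.0]], 0), ([[0.0, 0.0], [1.0, 1.0]], 1)]
clean_acc = accuracy(toy_predict, toy_samples)
rotated_acc = accuracy(toy_predict, toy_samples, transform=rotate90)
```

The toy classifier depends on image orientation, so its score falls once inputs are rotated. A classifier trained only on upright images fails in the same way.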

Highlights

- Implemented CNN and MLP architectures for six-class FashionMNIST classification.

- Used PyTorch Lightning for training, validation, testing, early stopping, checkpointing, and TensorBoard logging.

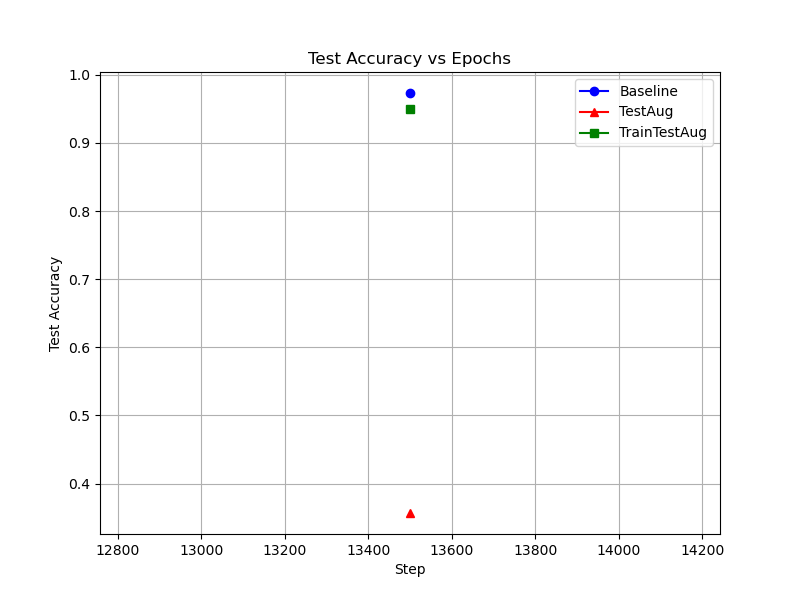

- Reached ~97.3% baseline test accuracy with the CNN model.

- Showed that unseen test-time rotations dropped accuracy to ~35.7%, while rotation-aware training recovered performance to ~95%.

- Observed that horizontal flips slightly hurt performance, reinforcing that augmentation should match the data domain.

Figures