
EASIEST: AI-Based Autism Diagnosis Support with Web-Based Eye Tracking

A capstone computer engineering project: a web-based AI system that uses eye-tracking signals from a standard webcam to support autism screening.

EASIEST was my computer engineering capstone project. The goal was to develop a web-based AI system that supports autism screening by using eye-tracking signals collected from a standard computer camera. The motivation came from two practical constraints: traditional Autism Spectrum Disorder (ASD) evaluation is costly in both time and resources, and commercial eye-tracking hardware is often expensive and difficult to scale.

The core engineering idea was to move an eye-tracking-based ASD support system directly into the browser. Instead of depending on specialized devices, EASIEST combines WebGazer-style webcam eye tracking with a Flask-based web application, PostgreSQL storage, patient and test workflows, gaze-data processing, fixation filtering, feature generation, and machine-learning-based prediction mechanisms.

The project was positioned as one of the first examples of using web-based eye tracking for ASD screening support. While many AI systems attempt autism classification, the distinguishing contribution here is the browser-based gaze capture pipeline: patients complete visual tasks in the browser, gaze data is recorded in real time, and the signal is transformed into model-ready features.
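
To make the last step concrete, here is a minimal sketch of how detected fixations could be turned into a flat feature vector. It is an illustration under stated assumptions, not the project's actual feature set: the `AOI` class, the `fixation_features` function, the tuple layout, and the three features (fixation count, mean fixation duration, dwell-time ratio per area of interest) are all hypothetical names and choices.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical layout for one fixation: (start_ms, end_ms, x_px, y_px).
FixationTuple = Tuple[float, float, float, float]


@dataclass(frozen=True)
class AOI:
    """A rectangular area of interest on the stimulus (assumed shape)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, x: float, y: float) -> bool:
        return self.left <= x <= self.right and self.top <= y <= self.bottom


def fixation_features(
    fixations: List[FixationTuple], aois: List[AOI]
) -> Dict[str, float]:
    """Summarize fixations as named numeric features for a classifier."""
    durations = [end - start for start, end, _, _ in fixations]
    total = sum(durations)
    features = {
        "fixation_count": float(len(fixations)),
        "mean_fixation_ms": total / len(fixations) if fixations else 0.0,
    }
    for aoi in aois:
        # Dwell time: summed duration of fixations that land inside the AOI.
        dwell = sum(
            end - start
            for start, end, x, y in fixations
            if aoi.contains(x, y)
        )
        features[f"dwell_ratio_{aoi.name}"] = dwell / total if total else 0.0
    return features


if __name__ == "__main__":
    face = AOI("face", 0, 0, 200, 200)
    sample = [(0.0, 200.0, 100.0, 100.0), (300.0, 400.0, 500.0, 500.0)]
    print(fixation_features(sample, [face]))
```

Returning a dictionary keyed by feature name keeps the output easy to log and to convert into a model input row; an empty recording yields zeros rather than raising.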

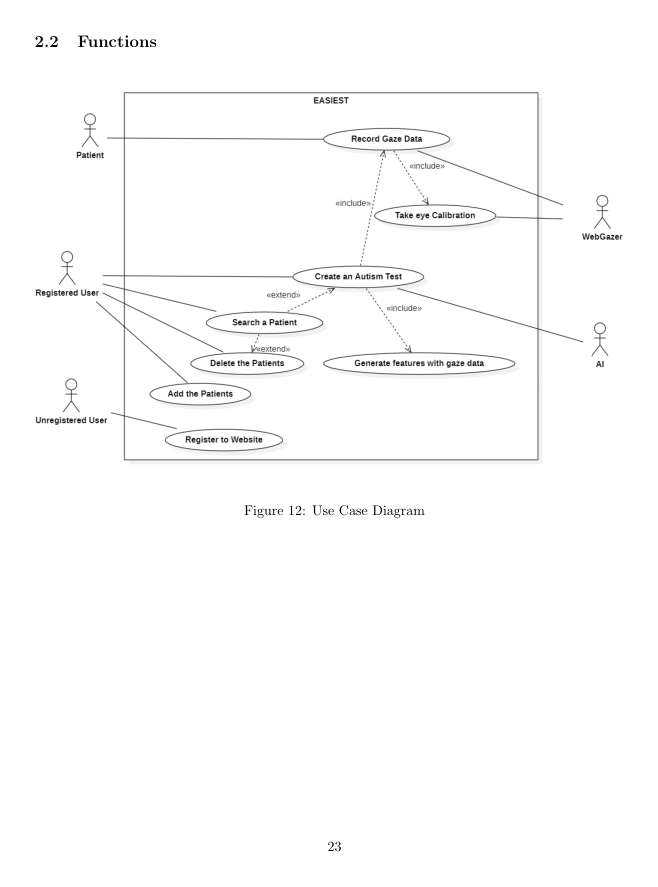

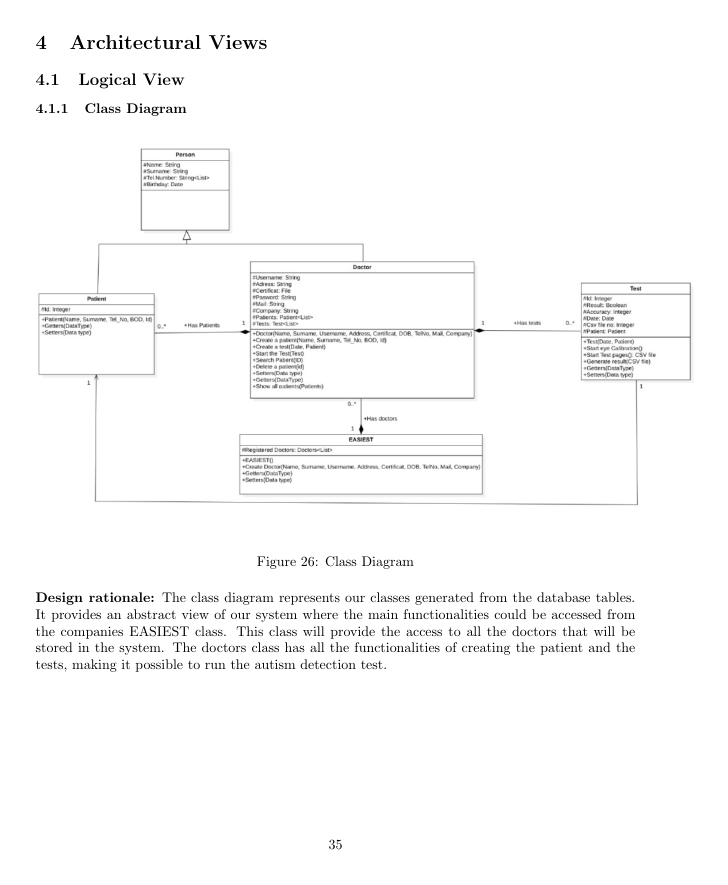

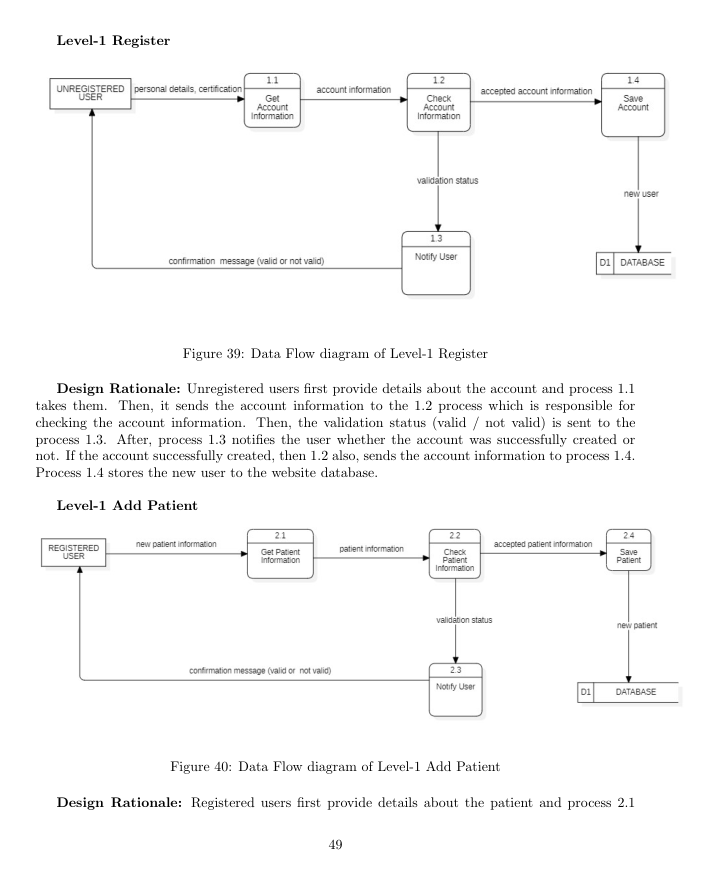

A large part of the project was software engineering, not only model development. The report includes use-case diagrams, sequence diagrams, activity diagrams, class diagrams, data-flow diagrams, database design, UI flows, backend and frontend implementation, Selenium integration tests, Pytest unit tests, and user-testing feedback from a healthcare professional.

The clinical framing is essential: EASIEST is not presented as a standalone diagnostic tool that replaces clinicians, but as a supportive screening and decision-support system. The user feedback reinforced this point: eye-tracking data can provide useful quantitative evidence, but ASD diagnosis still requires broader clinical evaluation.

I am especially grateful to Assist. Prof. Dr. Sukru Eraslan and Prof. Dr. Yeliz Yesilada for supervising the project, supporting the research direction, and sharing datasets and domain knowledge that made this work possible.

Highlights

- Integrated webcam-based eye tracking into a browser-native ASD screening workflow instead of relying on commercial eye-tracking hardware.

- Designed patient, doctor, registration, calibration, gaze recording, feature generation, and AI prediction workflows.

- Implemented a Flask/PostgreSQL backend with SQLAlchemy models for patient and test management.

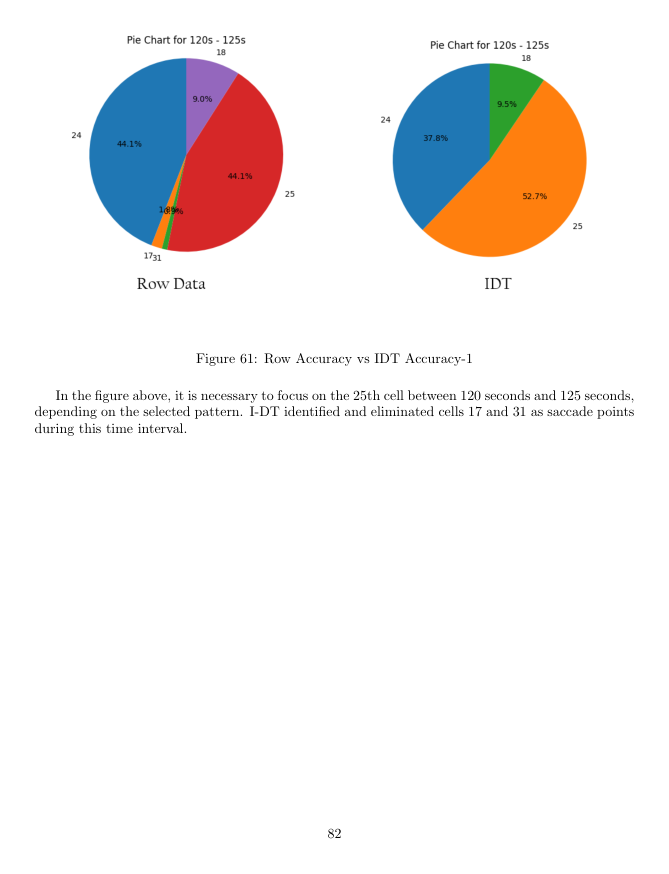

- Processed raw gaze points with fixation filtering, including I-DT-style (dispersion-threshold) logic to remove saccade points and improve feature quality.

- Documented the system with UML artifacts, data-flow diagrams, database design, UI flows, and implementation details.

- Validated the software stack with Selenium integration tests and Pytest unit tests for gaze-processing components.

- Positioned the system as supportive screening and decision support rather than clinical replacement.
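
The fixation-filtering highlight can be illustrated with a short dispersion-threshold sketch. This follows the general I-DT idea (group consecutive samples whose spatial spread stays small for long enough, and discard the rest as saccade points); it is not the project's implementation. The function name `idt_fixations`, the sample layout, and the default thresholds of 50 px and 100 ms are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

# Hypothetical layout for one raw gaze sample: (timestamp_ms, x_px, y_px).
GazePoint = Tuple[float, float, float]


@dataclass
class Fixation:
    start_ms: float
    end_ms: float
    x: float  # centroid of the grouped samples
    y: float

    @property
    def duration_ms(self) -> float:
        return self.end_ms - self.start_ms


def _dispersion(window: Sequence[GazePoint]) -> float:
    """Dispersion as (max x - min x) + (max y - min y) over the window."""
    xs = [p[1] for p in window]
    ys = [p[2] for p in window]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def idt_fixations(
    points: Sequence[GazePoint],
    max_dispersion_px: float = 50.0,  # assumed threshold
    min_duration_ms: float = 100.0,   # assumed threshold
) -> List[Fixation]:
    """Return fixations; samples outside any fixation are dropped."""
    fixations: List[Fixation] = []
    i, n = 0, len(points)
    while i < n:
        # Grow the initial window until it spans the minimum duration.
        j = i
        while j < n and points[j][0] - points[i][0] < min_duration_ms:
            j += 1
        if j >= n:
            break  # remaining samples are too short to form a fixation
        if _dispersion(points[i:j + 1]) <= max_dispersion_px:
            # Extend the window while the samples stay tightly clustered.
            while j + 1 < n and _dispersion(points[i:j + 2]) <= max_dispersion_px:
                j += 1
            window = points[i:j + 1]
            fixations.append(Fixation(
                start_ms=window[0][0],
                end_ms=window[-1][0],
                x=sum(p[1] for p in window) / len(window),
                y=sum(p[2] for p in window) / len(window),
            ))
            i = j + 1
        else:
            i += 1  # treat the first sample as a saccade point and move on
    return fixations
```

Webcam-based gaze estimates are noisier than those from dedicated hardware, so in practice both thresholds would need tuning against the actual sampling rate and screen size.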

Figures