Decision Tree Optimization for Eye-Tracking Features

Applied PrInDT-based decision tree optimization methods to eye-tracking features and compared them against a GLM baseline and standard conditional inference trees (ctree).

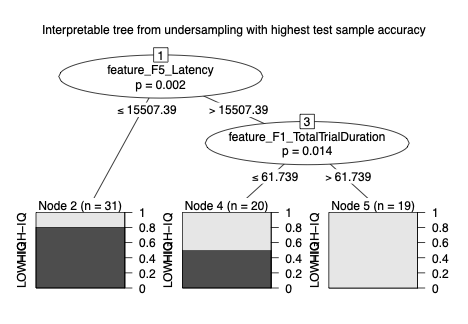

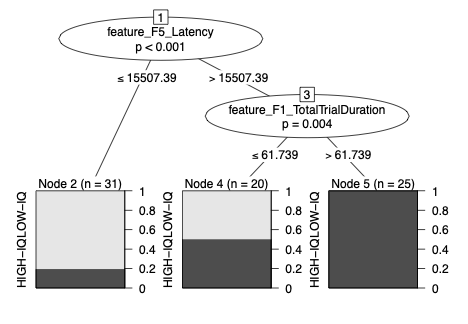

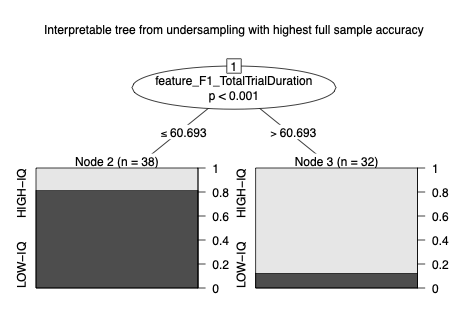

This project focused on decision tree optimization methods rather than black-box prediction. Using eye-tracking features extracted from cognitive task data, I compared a classical GLM baseline, a standard conditional inference tree, and PrInDT-based optimized tree methods.

The main goal was to test whether optimized, resampling-based decision tree frameworks could improve balanced classification performance while keeping the models interpretable. This matters because eye-tracking research often needs not only predictions but also understandable decision rules.
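Resampling-based tree frameworks generally fit a tree on each of many class-balanced subsamples and keep the trees that score best on balanced accuracy. The sketch below shows only the resampling step, as repeated random undersampling of the larger class. It is a minimal illustration under that assumption: `undersample` and `balanced_draws` are hypothetical helpers, not PrInDT functions, and the exact PrInDT procedure (resampling percentages, selection rules) is not reproduced here.

```python
import random
from collections import Counter


def undersample(rows, labels, seed=0):
    """Return a class-balanced subsample by randomly dropping rows of larger classes.

    Hypothetical helper for illustration; not the PrInDT implementation.
    """
    rng = random.Random(seed)
    n_min = min(Counter(labels).values())
    # Group row indices by class label.
    by_class = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    keep = []
    for idx in by_class.values():
        # Sampling n_min rows keeps all rows of the smallest class
        # and a random subset of every larger class.
        keep.extend(rng.sample(idx, n_min))
    keep.sort()
    return [rows[i] for i in keep], [labels[i] for i in keep]


def balanced_draws(rows, labels, n_draws, seed=0):
    """Yield n_draws undersampled datasets, one per derived seed."""
    for k in range(n_draws):
        yield undersample(rows, labels, seed=seed + k)


# Toy data (hypothetical, not project data): one feature per row.
X = [[1.0], [2.0], [3.0], [4.0], [5.0]]
y = ["HIGH", "HIGH", "HIGH", "LOW", "LOW"]
for X_sub, y_sub in balanced_draws(X, y, n_draws=3):
    print(Counter(y_sub))  # each draw holds 2 HIGH and 2 LOW rows
```

In a full workflow, a tree would be fit on each draw and evaluated on held-out data; repeating the draw is what lets the framework search over many candidate trees instead of relying on a single fit.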

The classification target was a binary LOW-IQ vs. HIGH-IQ group label. This portfolio version is intentionally framed around methodology and feature-based model optimization rather than the raw research data, which is not public because of the research context.

The work builds on the PrInDT family of decision tree optimization methods associated with Prof. Claus Weihs, whose work I gratefully acknowledge. This includes functions such as PrInDT, PrInDTAll, PrInDTreg, PrInDTregAll, PrInDTMulev, and related R/Python implementations.

Highlights

- Compared GLM, standard ctree, PrInDT, RePrInDT, and OptPrInDT-style optimization workflows.

- Used eye-tracking features such as total trial duration, latency, transition counts, fixation duration, and normalized transition features.

- Observed a progression in balanced accuracy from ~0.76 with GLM to ~0.78 with ctree and ~0.86 with optimized PrInDT-based trees.

- Identified stable interpretable split variables including total trial duration, latency, and TN_F5-style transition features.

- Positioned the analysis as a research-oriented interpretable ML workflow rather than a raw-data public benchmark.
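Balanced accuracy, the metric behind the scores above, is the mean of per-class recall; for two groups it equals (sensitivity + specificity) / 2, so a model cannot score well simply by favoring the larger group. The sketch below computes it in plain Python on hypothetical toy labels (not project data); scikit-learn's `balanced_accuracy_score` computes the same quantity.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so each class counts equally regardless of size."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, y in enumerate(y_true) if y == c]
        hits = sum(1 for i in idx if y_pred[i] == c)
        recalls.append(hits / len(idx))
    return sum(recalls) / len(recalls)


def accuracy(y_true, y_pred):
    """Plain accuracy, shown for comparison."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical labels with an imbalanced split (4 LOW, 2 HIGH).
y_true = ["LOW", "LOW", "LOW", "LOW", "HIGH", "HIGH"]
y_pred = ["LOW", "LOW", "LOW", "LOW", "LOW", "HIGH"]
print(accuracy(y_true, y_pred))           # 5/6, about 0.833
print(balanced_accuracy(y_true, y_pred))  # (1.0 + 0.5) / 2 = 0.75
```

The gap between the two numbers comes entirely from the missed HIGH case: plain accuracy hides it because HIGH is the smaller group, while balanced accuracy weights both groups equally.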

Figures